Autoencoders for anomaly detection

Reconstruction-based fault detection with neural networks

What is anomaly detection with autoencoders?

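Autoencoders are neural networks trained to reconstruct their own input. Trained only on data from normal operation, they learn what normal system behaviour looks like and reveal deviations through a high reconstruction error.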

Typical applications include:

- Monitoring electrical signals

- Fault detection in hydraulic systems

- Condition monitoring of machinery

- Cybersecurity (detecting unusual behaviour)

This approach belongs to unsupervised anomaly detection, since no fault labels are needed, and is widely used in industrial and IoT applications.

1. Motivation

In technical systems—for example electrical signals, hydraulic plants, or sensor monitoring—a common question is: how do you detect faults when no explicit fault models exist?

An elegant answer is reconstruction-based anomaly detection with autoencoders. A neural network is trained exclusively on data from normal conditions. When an unknown or faulty condition appears, the network reconstructs it poorly and the reconstruction error rises. The approach has three main properties:

- Unsupervised (no fault labels required)

- Model-free (no physical fault description needed)

- Scales well to many sensor signals

2. Intuitive explanation

An autoencoder learns: “What does normal system behaviour look like?”

For a known condition, the network reconstructs well. For an unknown condition (e.g. sensor fault, drift, defect) the signal can no longer be reproduced cleanly—the error increases.

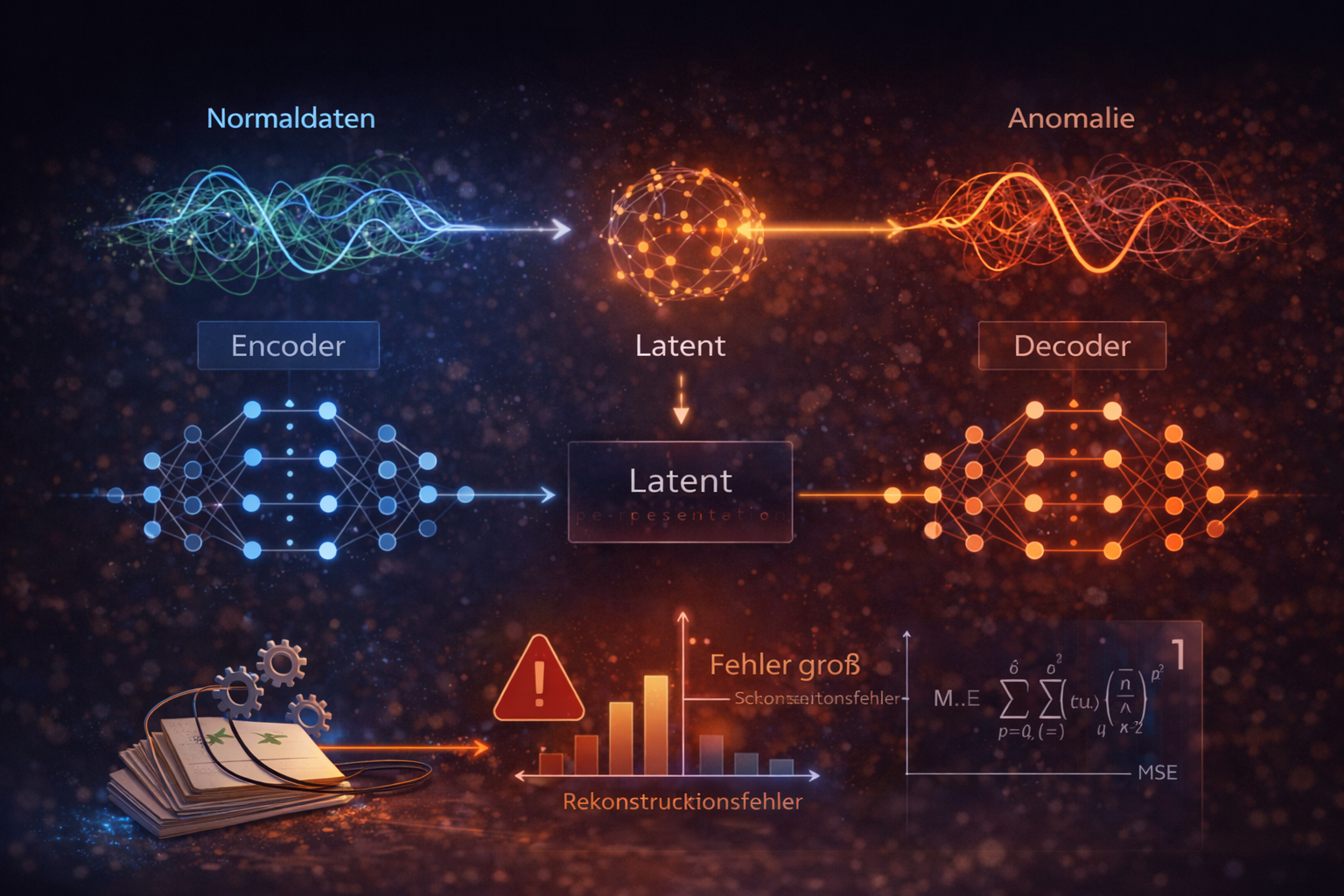

3. Basic principle of an autoencoder

An autoencoder has three parts:

Input \(x\) → encoder → latent space \(z\) → decoder → reconstruction \(\hat{x}\)

Encoder: compresses the input signal, \(z = f(x)\).

Latent space: a compact representation of the most important features.

Decoder: reconstructs the input signal, \(\hat{x} = g(z)\).

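Error computation: typically the squared reconstruction error; dividing it by the number of components \(n\) gives the mean squared error (MSE):

\[\mathrm{Loss} = ||x - \hat{x}||^2,\quad \mathrm{MSE} = \frac{1}{n}||x - \hat{x}||^2\]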

If the error exceeds a defined threshold, the state is flagged as an anomaly.

The autoencoder approximates a function: \[ \hat{x} = g(f(x)) \] where \(f\) is the encoder and \(g\) is the decoder.

The training objective, minimised over the parameters of \(f\) and \(g\) on normal data, is: \[ \min_{f,g} ||x - g(f(x))||^2 \]

The latent space acts as an information bottleneck that only lets the most important structures of the signal through.

The latent space can be viewed as a projection onto a low-dimensional manifold. Normal data lie close to this structure, while anomalies lie outside it.

4. Small worked example

Consider a simplified 2D sensor system \(x = (x_1, x_2)\) with the normal assumption \(x_2 \approx x_1\).

Linear mini-autoencoder:

\[ z = 0.5x_1 + 0.5x_2,\quad \hat{x}_1 = z,\quad \hat{x}_2 = z \]

Normal case: \(x = (2, 2.1)\)

\[ z = 2.05,\quad \hat{x} = (2.05, 2.05),\quad e = x - \hat{x} = (-0.05, 0.05),\quad ||e||^2 = 0.005,\quad \mathrm{MSE} = 0.0025 \]

Very small → normal condition.

Fault case: \(x = (2, 5)\)

\[ z = 3.5,\quad \hat{x} = (3.5, 3.5),\quad e = x - \hat{x} = (-1.5, 1.5),\quad ||e||^2 = 4.5,\quad \mathrm{MSE} = 2.25 \]

Much higher → anomaly.
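The arithmetic of this worked example can be checked with a few lines of plain Python. This is only a sketch of the hand-built linear mini-autoencoder from this section, not a trained network; the function name `mini_autoencoder` is made up for illustration. It reports both the summed squared error \(||e||^2\) and the mean over the two components, so the two quantities are not confused.

```python
def mini_autoencoder(x1, x2):
    """Linear mini-autoencoder from the worked example: z is the average of both inputs."""
    z = 0.5 * x1 + 0.5 * x2              # encoder: one latent value
    x_hat = (z, z)                       # decoder: both outputs equal z
    e = (x1 - x_hat[0], x2 - x_hat[1])   # reconstruction error per component
    squared_error = e[0] ** 2 + e[1] ** 2
    mse = squared_error / 2              # mean over the two components
    return z, x_hat, e, squared_error, mse


for label, sample in [("normal", (2.0, 2.1)), ("fault", (2.0, 5.0))]:
    z, x_hat, e, squared_error, mse = mini_autoencoder(*sample)
    print(f"{label}: z = {z:.2f}, squared error = {squared_error:.4f}, MSE = {mse:.4f}")
# normal: z = 2.05, squared error = 0.0050, MSE = 0.0025
# fault: z = 3.50, squared error = 4.5000, MSE = 2.2500
```

Whichever of the two error measures is used, the fault case is larger than the normal case by a factor of 900, which is what makes a threshold decision possible.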

5. Practical use (signal monitoring)

In technical systems, such as sensor or electrical signal monitoring, an autoencoder that has learned the normal condition can check incoming data continuously.

Typical faults that can be detected:

- Sensor drift

- Noise outside the normal range

- Sudden signal changes

- Hardware defects

6. Python implementation (PyTorch)

The following minimal example trains an autoencoder only on normal data and then compares the error for a normal sample and a faulty sample.

    import torch
    import torch.nn as nn
    import torch.optim as optim

    torch.manual_seed(0)

    # 1) Training data (normal condition): x2 ~ x1 + small noise
    n_samples = 1000
    x1 = torch.randn(n_samples, 1)
    x2 = x1 + 0.1 * torch.randn(n_samples, 1)
    data = torch.cat((x1, x2), dim=1)

    # 2) Autoencoder
    class Autoencoder(nn.Module):
        def __init__(self):
            super().__init__()
            self.encoder = nn.Sequential(
                nn.Linear(2, 4),
                nn.ReLU(),
                nn.Linear(4, 1)
            )
            self.decoder = nn.Sequential(
                nn.Linear(1, 4),
                nn.ReLU(),
                nn.Linear(4, 2)
            )

        def forward(self, x):
            z = self.encoder(x)
            x_hat = self.decoder(z)
            return x_hat

    model = Autoencoder()
    criterion = nn.MSELoss()
    optimizer = optim.Adam(model.parameters(), lr=0.01)

    # 3) Training
    for _ in range(200):
        optimizer.zero_grad()
        output = model(data)
        loss = criterion(output, data)
        loss.backward()
        optimizer.step()

    # 4) Test: normal condition vs. fault condition
    normal_sample = torch.tensor([[2.0, 2.1]])
    anomaly_sample = torch.tensor([[2.0, 5.0]])

    error_normal = criterion(model(normal_sample), normal_sample)
    error_anomaly = criterion(model(anomaly_sample), anomaly_sample)

    print("Normal Error:", error_normal.item())
    print("Anomaly Error:", error_anomaly.item())

7. Threshold selection

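Choosing the threshold is critical: a value that is too low causes many false alarms, while a value that is too high misses real faults.

In practice the threshold is often tuned on validation data from normal operation. A common rule of thumb is

\[ \mathrm{Threshold} = \mu + 3\sigma \]

where \(\mu\) and \(\sigma\) are the mean and standard deviation of the reconstruction error on that data. All error values above this threshold are classified as anomalies.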
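As a rough sketch, the \(\mu + 3\sigma\) rule could be computed as follows. It assumes that \(\mu\) and \(\sigma\) are the mean and sample standard deviation of reconstruction errors measured on normal validation data. The helper names and the five error values are invented purely for illustration and are not measurements from a real system.

```python
import statistics


def three_sigma_threshold(errors):
    """Return mean + 3 * sample standard deviation of the given errors."""
    mu = statistics.mean(errors)
    sigma = statistics.stdev(errors)  # sample standard deviation (n - 1 in the denominator)
    return mu + 3 * sigma


def is_anomaly(error, threshold):
    """Flag an error value that lies above the threshold."""
    return error > threshold


# Illustrative reconstruction errors on normal validation data (made-up values)
validation_errors = [0.010, 0.012, 0.008, 0.011, 0.009]

threshold = three_sigma_threshold(validation_errors)
print(f"threshold = {threshold:.4f}")   # threshold = 0.0147
print(is_anomaly(0.011, threshold))     # False
print(is_anomaly(0.020, threshold))     # True
```

With so few values the estimate of \(\sigma\) is unreliable; in a real application the threshold would be derived from a much larger set of normal samples and then checked against the acceptable false-alarm rate.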

8. Autoencoders in practice: training in Python, execution on the PLC

In real automation systems an autoencoder is usually not trained directly on the PLC. Training is done offline in Python, for example with PyTorch, where backpropagation, optimisers such as Adam, and large training sets are available.

After training, only the learned model parameters are transferred:

- encoder weights and bias values

- decoder weights and bias values

- the chosen activation function

Only inference then runs on the PLC: the current feature vector passes through the encoder and decoder, the signal is reconstructed, and the reconstruction error is computed as the anomaly score.

This workflow is particularly attractive for industrial applications because the PLC only needs to perform a few arithmetic operations while still using a trained neural model.
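The parameter transfer could be scripted instead of copied by hand. The following is a minimal sketch, not a definitive exporter: the helper `to_st_initializer`, the array name `w_enc`, and the sample values are hypothetical. The flat, row-major initializer syntax is an assumption that should be checked against the target PLC toolchain. With PyTorch, a nested list like the one below could be obtained from a linear layer via `layer.weight.detach().tolist()`, which yields one row per output neuron.

```python
def to_st_initializer(name, matrix):
    """Format a 2-D list of floats as a Structured Text array declaration.

    Assumes the PLC toolchain accepts a flat list in row-major order
    (last index varies fastest); verify this for your environment.
    """
    rows, cols = len(matrix), len(matrix[0])
    flat = [value for row in matrix for value in row]  # row-major flattening
    values = ", ".join(f"{v:.6f}" for v in flat)
    return f"{name} : ARRAY[0..{rows - 1}, 0..{cols - 1}] OF REAL := [{values}];"


# Hypothetical 2x2 weight matrix, for illustration only
example_weights = [[0.5, -0.25], [1.0, 2.0]]
print(to_st_initializer("w_enc", example_weights))
# w_enc : ARRAY[0..1, 0..1] OF REAL := [0.500000, -0.250000, 1.000000, 2.000000];
```

Generating the declarations from the trained model avoids transcription errors and makes it easy to redeploy after retraining.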

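    FUNCTION_BLOCK Autoencoder_5_3_5
    VAR_INPUT
        enable    : BOOL;
        (* Feature vector *)
        x1        : REAL;
        x2        : REAL;
        x3        : REAL;
        x4        : REAL;
        x5        : REAL;
        threshold : REAL := 0.05;
    END_VAR
    VAR_OUTPUT
        valid   : BOOL;
        anomaly : BOOL;
        mse     : REAL;
        h1 : REAL; h2 : REAL; h3 : REAL;
        x1_hat  : REAL;
        x2_hat  : REAL;
        x3_hat  : REAL;
        x4_hat  : REAL;
        x5_hat  : REAL;
    END_VAR
    VAR
        x     : ARRAY[0..4] OF REAL;
        h     : ARRAY[0..2] OF REAL;
        x_hat : ARRAY[0..4] OF REAL;
        (* Trained parameters: placeholders, to be initialised with the values exported from Python *)
        w_enc : ARRAY[0..2, 0..4] OF REAL;
        b_enc : ARRAY[0..2] OF REAL;
        w_dec : ARRAY[0..4, 0..2] OF REAL;
        b_dec : ARRAY[0..4] OF REAL;
        z     : REAL;
        err   : REAL;
        i, j  : INT;
    END_VAR

    (* Initialization *)
    valid := FALSE;
    anomaly := FALSE;
    mse := 0.0;

    IF enable THEN
        x[0] := x1;
        x[1] := x2;
        x[2] := x3;
        x[3] := x4;
        x[4] := x5;

        (* ENCODER: h = tanh(w_enc * x + b_enc) *)
        (* TANH may not exist on every platform; if it is missing, build it from EXP *)
        FOR j := 0 TO 2 DO
            z := b_enc[j];
            FOR i := 0 TO 4 DO
                z := z + w_enc[j, i] * x[i];
            END_FOR
            h[j] := TANH(z);
        END_FOR

        (* DECODER: x_hat = w_dec * h + b_dec *)
        FOR i := 0 TO 4 DO
            z := b_dec[i];
            FOR j := 0 TO 2 DO
                z := z + w_dec[i, j] * h[j];
            END_FOR
            x_hat[i] := z;
        END_FOR

        (* Error computation *)
        err := 0.0;
        FOR i := 0 TO 4 DO
            err := err + (x[i] - x_hat[i]) * (x[i] - x_hat[i]);
        END_FOR
        mse := err / 5.0;
        anomaly := (mse > threshold);

        (* Copy internal results to the outputs *)
        h1 := h[0]; h2 := h[1]; h3 := h[2];
        x1_hat := x_hat[0];
        x2_hat := x_hat[1];
        x3_hat := x_hat[2];
        x4_hat := x_hat[3];
        x5_hat := x_hat[4];

        valid := TRUE;
    END_IF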

Structure of the Structured Text example

- Inputs: five normalized features from the process

- Encoder: linear combination followed by tanh activation

- Latent space: compact representation in three variables

- Decoder: reconstruction of the original feature vector

- Error analysis: computation of the mean squared error (MSE)

- Decision: comparison with a threshold for anomaly detection
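Before deployment it can be useful to have a Python reference of the same inference steps, so that PLC outputs can be compared against it for identical inputs. The sketch below follows the structure listed above (linear combination, tanh, linear decoder, MSE, threshold). The function name and the parameter names `w_enc`, `b_enc`, `w_dec`, `b_dec` are assumptions for illustration, and the all-zero parameters in the usage example are placeholders, not trained values.

```python
import math


def autoencoder_5_3_5(x, w_enc, b_enc, w_dec, b_dec, threshold=0.05):
    """Reference inference for a 5-3-5 autoencoder with tanh in the hidden layer.

    w_enc has 3 rows of 5 weights, w_dec has 5 rows of 3 weights.
    Returns the hidden values, the reconstruction, the MSE and the anomaly flag.
    """
    # Encoder: weighted sum plus bias, followed by tanh
    h = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
         for row, b in zip(w_enc, b_enc)]
    # Decoder: weighted sum plus bias, no activation
    x_hat = [sum(w * hj for w, hj in zip(row, h)) + b
             for row, b in zip(w_dec, b_dec)]
    # Mean squared reconstruction error over all features
    mse = sum((xi - xh) ** 2 for xi, xh in zip(x, x_hat)) / len(x)
    return h, x_hat, mse, mse > threshold


# Placeholder parameters (all zero), only to show the call signature
w_enc = [[0.0] * 5 for _ in range(3)]
b_enc = [0.0] * 3
w_dec = [[0.0] * 3 for _ in range(5)]
b_dec = [0.0] * 5

h, x_hat, mse, anomaly = autoencoder_5_3_5([0.1] * 5, w_enc, b_enc, w_dec, b_dec)
print(round(mse, 6), anomaly)
```

Since a PLC `REAL` is typically single precision while Python uses double precision, small numerical differences between the two implementations are to be expected, so a comparison should use a tolerance.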

9. Strengths and limitations

Strengths:

- No fault models required

- Can detect unknown faults

- Suitable for high-dimensional sensor data

- Unsupervised learning

Limitations:

- Threshold choice is critical

- Representative normal data are essential

- Drift can degrade detection quality

10. Conclusion

Anomaly detection with autoencoders is a powerful, flexible approach for technical systems. The core idea is: compression → reconstruction → error analysis.

Especially with many sensor signals, complex relationships can be modeled without defining explicit fault classes.

Author: Ruedi von Kryentech

Created: 6 Apr 2026 · Last updated: 6 Apr 2026

Technical content as of the last update.