Quantum computer security: Post-quantum cryptography for developers

Technical guide to post-quantum cryptography and pragmatic PQC migration in real systems

What you will learn in this article

You will understand why quantum computer security is an architecture topic today, not only a research topic.

You will learn the essentials of qubits, Shor, Grover, and the consequences for RSA, ECC, AES, and hash functions.

You will get a practical overview of post-quantum cryptography, including algorithm choice and trade-offs.

You will receive a concrete PQC migration guideline with checklist, code examples, and integration points.

Table of contents

1) Why developers must act now

2) Basics: What makes quantum computers dangerous?

3) Why Shor’s algorithm is so dangerous

4) Putting the time horizon in perspective

5) Post-quantum cryptography: standards and selection

6) What does this mean for classical schemes?

7) Complexity comparison: classical vs. quantum

8) Why lattice-based cryptography is considered quantum-resistant

9) Concrete attack scenarios with quantum computers

10) Performance and trade-offs of post-quantum cryptography

11) Threat model: who attacks you and what is realistic?

12) Practical implementation: from theory to prototype

Why developers must act now

Classical public-key schemes such as RSA and ECC could be broken by Shor’s algorithm once sufficiently large, error-corrected quantum computers exist. Anyone encrypting only with classical schemes today risks sensitive data being decrypted later. That is what quantum computer security is about.

Important: Attackers can capture data today and decrypt it later (harvest now, decrypt later).

For data that must stay confidential for a long time (e.g. 10+ years), early PQC migration is critical.

Note: This article stays technical but is deliberately practical for developer teams in app, backend, and infrastructure projects.

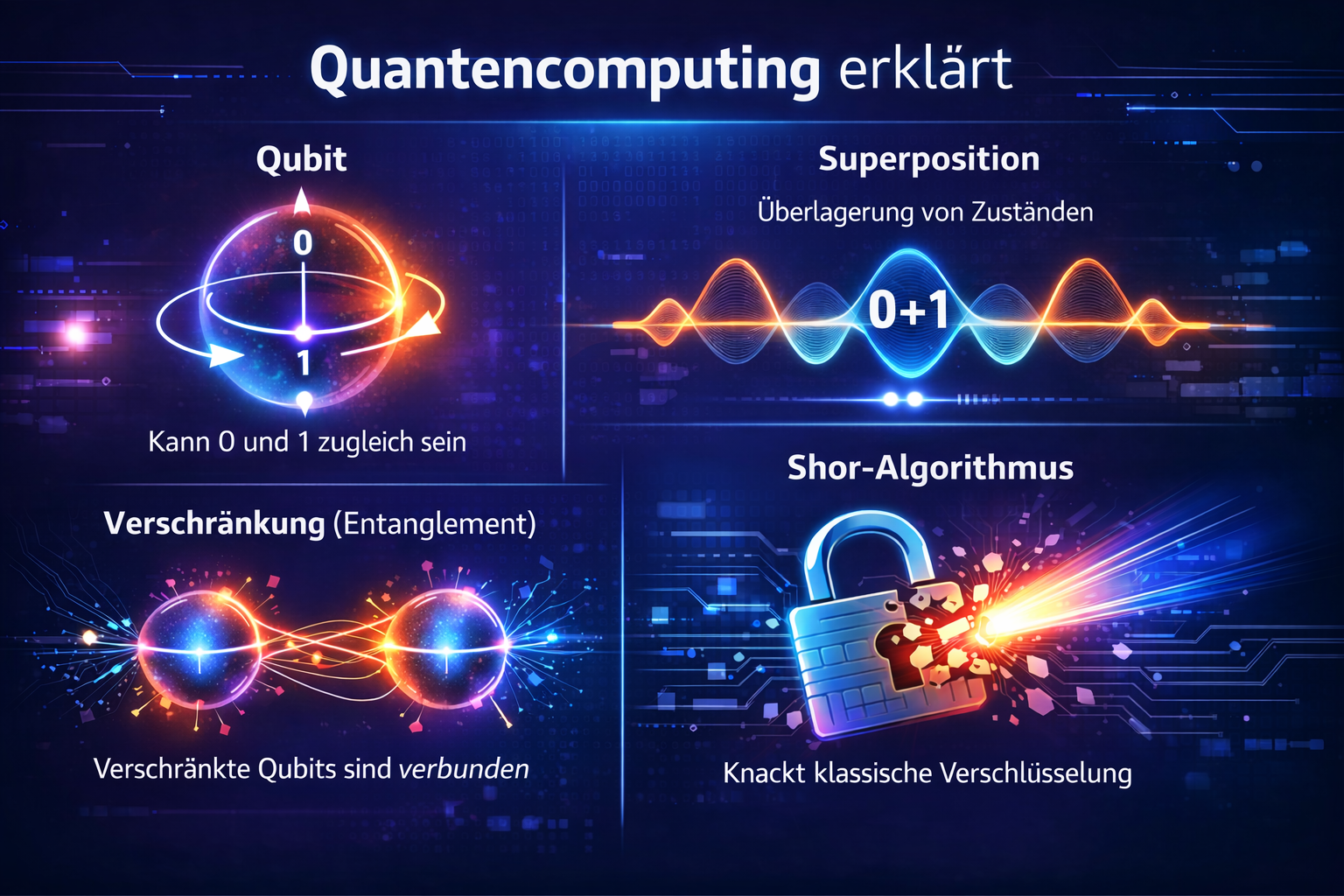

Basics: What makes quantum computers dangerous?

A quantum computer is not a classical processor with faster transistors but a fundamentally different kind of computing system. It deliberately uses quantum-mechanical states of physical systems (e.g. electron spins, superconducting circuits, or photons) to process information.

Qubit: smallest unit of information; can exist in a superposition of 0 and 1.

Quantum gates: controlled operations (e.g. microwave pulses or lasers) that change qubit states in a targeted way.

Measurement: only on measurement does the state collapse to a concrete outcome (0 or 1).

Entanglement: multiple qubits can share joint states that cannot be described independently.

Computing on quantum hardware therefore means: prepare a state, transform it with a gate sequence, then measure. Many algorithms are run repeatedly to obtain a reliable result from probabilistic measurements.

Practically central is decoherence: qubits lose their information quickly due to noise. That is why error correction, low temperatures, and very precise control are needed. This engineering hurdle is the main reason powerful quantum attacks are not yet broadly available.

Quantum computers use quantum-mechanical states directly as a computing resource. A qubit can represent multiple states at once in superposition, and qubits can be coupled through entanglement.

Shor’s algorithm solves integer factorization and discrete logarithms in polynomial time, far more efficiently than the best known classical methods. That puts RSA, DH/ECDH, and ECDSA under pressure. Grover’s algorithm gives a quadratic speed-up for brute-force search, which roughly halves the effective bit security of symmetric keys.

Short definitions:

RSA/ECC: asymmetric cryptography for key exchange and signatures.

AES: symmetric encryption; remains robust with sufficient key length.

Rule of thumb: for long confidentiality lifetimes, use AES-256 and modern hashes today.

Why Shor’s algorithm is so dangerous

RSA security relies on the fact that splitting a large number N = p · q into its prime factors p and q is extremely costly for classical computers. For sufficiently large key sizes this is not feasible in practice.

Shor’s algorithm does not attack this problem merely with more compute, but with a different mathematical strategy. It reduces factorization to a period-finding problem.

Instead of searching for factors of N directly, you consider a function of the form:

f(x) = a^x mod N

Here a is a randomly chosen integer coprime to N.

This function is periodic: there is a smallest positive r such that the same value is reached again:

a^r ≡ 1 mod N

so that f(x + r) = f(x) for all x. This r is the sought period, and it is the core of the algorithm: if the period is known, the factors of N can be computed with high probability.

Important: Shor’s algorithm does not factor large numbers directly. It first finds a period and derives the prime factors from it.

How factors arise from the period

If the found period r is even and a^(r/2) ≢ -1 mod N, candidates for the factors can be computed via the greatest common divisor:

gcd(a^(r/2) - 1, N)

gcd(a^(r/2) + 1, N)

Under these conditions both expressions yield non-trivial divisors of N, and the factorization has succeeded. If r is odd or a^(r/2) ≡ -1 mod N, you simply retry with a different a. This step is mathematically elegant: from the structure of the modular exponential you obtain information about the primes directly.
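The reduction can be tried out classically for tiny numbers. The following sketch (plain Python, standard library only) finds the period by brute force, which is exactly the step a quantum computer would speed up, and then applies the gcd step described above. The function names are illustrative; for real key sizes the brute-force loop is hopeless.

```python
from math import gcd


def find_period(a: int, n: int) -> int:
    """Smallest r > 0 with a^r ≡ 1 (mod n), found by brute force.

    This is the step Shor's algorithm performs efficiently on a quantum
    computer; classically it takes time exponential in the bit length of n.
    """
    if gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factors_from_period(a: int, n: int):
    """Classical post-processing of Shor's algorithm: derive factors from r."""
    r = find_period(a, n)
    if r % 2 == 1:
        return None  # odd period: retry with another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # a^(r/2) ≡ -1 (mod n): trivial case, retry with another a
    return gcd(half - 1, n), gcd(half + 1, n)


print(find_period(7, 15))          # → 4
print(factors_from_period(7, 15))  # → (3, 5)
```

With N = 15 and a = 7 the period is 4, so a^(r/2) = 49 mod 15 = 4, and gcd(3, 15) = 3 and gcd(5, 15) = 5 are the prime factors.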

Why this is classically hard

Classical factorization methods such as the number field sieve scale very poorly with key length. For RSA-2048 this leads to a practically intractable effort. The issue is not that a single step is complicated, but that the overall search space is enormous.

Finding the period is also not efficiently solvable on classical machines. This is where quantum mechanics enters: a quantum computer evaluates f(x) on a superposition of many inputs x and then applies a quantum Fourier transform (QFT), so that interference makes the periodicity visible in the measurement outcome.

Simplified flow of Shor’s algorithm

- Choose a number a coprime to N.

- Evaluate f(x) = a^x mod N on a superposition of many inputs x.

- Extract period information with a quantum Fourier transform.

- Reconstruct the period r from the measurement outcome (classically, via continued fractions).

- Compute non-trivial factors of N using r.

Quantum Fourier transform (QFT): quantum version of the Fourier transform that makes periodic structure in a quantum state efficiently detectable.
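The reconstruction step is purely classical. A minimal sketch, assuming an idealized measurement outcome y from a q-bit register with y ≈ k · 2^q / r for some unknown k: the continued-fraction expansion of y / 2^q (computed here with Python’s `fractions.Fraction.limit_denominator`) yields a candidate denominator r, which is then checked against a^r ≡ 1 mod N. The function name and the values N = 15, a = 7, q = 8 are illustrative.

```python
from fractions import Fraction


def period_from_measurement(y: int, q_bits: int, a: int, n: int):
    """Recover the period r from one (idealized) QFT measurement outcome y.

    limit_denominator(n) returns the closest fraction k/r with r <= n,
    which is what the continued-fraction step of Shor's algorithm computes.
    """
    approx = Fraction(y, 2 ** q_bits).limit_denominator(n)
    r = approx.denominator
    # Accept the candidate only if it really is a period of a^x mod n
    return r if pow(a, r, n) == 1 else None


# Ideal outcomes for N = 15, a = 7 (r = 4) are multiples of 256 / 4 = 64;
# 65 simulates a slightly noisy measurement.
for y in (0, 64, 65, 128, 192):
    print(y, period_from_measurement(y, q_bits=8, a=7, n=15))
```

Outcomes 64, 65, and 192 yield r = 4. For 0 and 128, k and r share a factor, so only a divisor of r appears; a real run would then be repeated or small multiples of the denominator tried.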

Why this is critical for RSA and ECC

RSA relies on factorization. ECC and classical Diffie–Hellman rely on the discrete logarithm problem. Shor’s algorithm can attack both problem classes efficiently. That is what makes it dangerous: it does not merely speed up classical attacks a little—it changes the complexity class of the problem.

For developers this means: The security of RSA, ECDSA, ECDH, and similar schemes is not stable in the long run once sufficiently large, error-corrected quantum computers exist.

Key point: Shor turns a problem that is practically intractable today into one that is in principle efficiently solvable.

That is why post-quantum cryptography is necessary for public-key systems.

Putting the time horizon in perspective

There is no exact date for practical quantum attacks on large production systems. A realistic uncertainty window is about 10 to 20 years for broad relevance, with earlier partial risks in special scenarios.

- Technical progress is fast, but hardware remains difficult.

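- Standards and migration paths are already defined: NIST published FIPS 203 (ML-KEM), FIPS 204 (ML-DSA), and FIPS 205 (SLH-DSA) in August 2024, and NSA’s CNSA 2.0 sets migration timelines.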

- Lead time for a safe migration is usually longer than a single release cycle.

Post-quantum cryptography: standards and selection

Post-quantum cryptography (PQC) denotes algorithms that run on classical computers and are, to current knowledge, resistant to known quantum attacks. For teams this means: prepare architecture and protocols for algorithm agility now.

| Algorithm | Type | Purpose | Order of magnitude | Recommendation |
|---|---|---|---|---|
| ML-KEM (Kyber, FIPS 203) | KEM | Key exchange | Public keys/ciphertexts ~0.8–1.6 kB | Primary candidate for hybrid TLS |
| ML-DSA (Dilithium, FIPS 204) | Signature | Code signing, certificates | Signatures ~2.4–4.6 kB, far larger than ECDSA | Plan broadly, test early |
| Falcon (FN-DSA) | Signature | Performance-sensitive signatures | More compact, but more complex to implement | Evaluate selectively |
| SPHINCS+ (SLH-DSA, FIPS 205) | Hash-based sig. | High-assurance signature scenarios | Small keys, very large signatures (~8–50 kB) | Plan for special cases |
| HQC | KEM | Alternative / backup | Medium order of magnitude | Keep as reserve in the portfolio |

Values are intentionally rough; concrete parameters depend on security level and implementation.

What does this mean for classical schemes?

For classical public-key schemes the difference is dramatic: a sufficiently capable quantum computer could attack RSA-2048 or ECC not just somewhat faster but fundamentally more efficiently. NIST illustrates this vividly: what would take classical computers billions of years could shrink to hours or days for a cryptographically relevant quantum machine.

Symmetric cryptography fares better: AES is not directly broken by known quantum algorithms, but Grover’s algorithm reduces effective security to roughly the square root of the original search space. Simplified: under quantum attack AES-256 behaves like about 128-bit security and remains very strong, while AES-128 offers much less margin. That is why NIST and NSA recommend PQC for public-key cryptography for long-term protection, combined with AES-256 and SHA-384 or SHA-512 for additional margin.

RSA / ECC: not future-proof against quantum attacks.

AES: still usable, but prefer AES-256 for long-term security.

Hash: SHA-256 is practical; SHA-384/512 offers more margin.

Forward secrecy: still essential to better protect old sessions.

Complexity comparison: classical vs. quantum

The decisive difference between classical and quantum-based attacks is not only speed but the complexity class of the underlying problem.

A scheme is considered practically secure if the cost of the best known attack grows very quickly with key length, typically exponentially or sub-exponentially. Today’s cryptography relies on that.

RSA factorization compared

For RSA, security rests on the difficulty of factoring a large number N = p · q.

Classical (number field sieve):

Sub-exponential complexity:

L_N[1/3, (64/9)^(1/3)] = exp(((64/9)^(1/3) + o(1)) · (ln N)^(1/3) · (ln ln N)^(2/3)), with (64/9)^(1/3) ≈ 1.923

In short: this formula describes very fast growth of effort for classical factoring. Even a slightly longer RSA key massively increases runtime.

Meaning: N is the number to factor. L_N[α, c] is the usual shorthand for exp((c + o(1)) · (ln N)^α · (ln ln N)^(1-α)); α = 1/3 places the NFS between polynomial (α = 0) and fully exponential (α = 1) growth.

Quantum (Shor’s algorithm):

Polynomial complexity:

O((ln N)^3)

In short: cost grows only polynomially with problem size. That is why Shor can in principle attack RSA/ECC far more efficiently than classical methods.

Meaning of O(...): Big-O notation describes asymptotic growth and ignores constant factors (e.g. 2× or 10× more steps).

That is the crucial difference:

- Classical: cost grows extremely fast → practically intractable

- Quantum: cost grows moderately → feasible once a large, error-corrected quantum computer exists

Key point:

Moving from sub-exponential to polynomial makes the attack go from “practically impossible” to “realistic once the hardware exists”.
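To get a feel for these growth rates, the sketch below evaluates both expressions for common RSA sizes. Caveat: it drops the o(1) term and all constant factors, so the results are order-of-magnitude indicators in "bits of work", not security estimates; for comparison, RSA-2048 is usually rated at about 112-bit security. The helper names are illustrative.

```python
import math


def nfs_cost_log2(bits: int) -> float:
    """log2 of the heuristic NFS cost L_N[1/3, (64/9)^(1/3)], ignoring the o(1) term."""
    ln_n = bits * math.log(2)
    c = (64 / 9) ** (1 / 3)
    exponent = c * ln_n ** (1 / 3) * math.log(ln_n) ** (2 / 3)
    return exponent / math.log(2)


def shor_cost_log2(bits: int) -> float:
    """log2 of (ln N)^3, the polynomial growth quoted for Shor (constants ignored)."""
    ln_n = bits * math.log(2)
    return 3 * math.log2(ln_n)


for bits in (1024, 2048, 3072, 4096):
    print(f"RSA-{bits}: NFS ~2^{nfs_cost_log2(bits):.0f}, "
          f"Shor ~2^{shor_cost_log2(bits):.0f} operations")
```

Doubling the key from 2048 to 4096 bits adds dozens of bits of classical work but only a few bits (a small constant factor) to the polynomial quantum cost.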

Symmetric cryptography (AES) compared

Symmetric schemes such as AES are affected not by Shor but by Grover’s algorithm.

- Classical: cost ≈ 2^n (n = key length in bits)

- Quantum (Grover): cost ≈ 2^(n/2)

Therefore:

- AES-128 → effectively ~64-bit security (risky)

- AES-256 → effectively ~128-bit security (still strong)

Interpretation:

Grover halves the effective security level in bits but does not destroy it entirely.
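Grover’s quadratic speed-up can be observed in a small classical statevector simulation with NumPy. It uses about π/4 · √N oracle calls to find one marked item among N = 2^n, compared with about N/2 guesses classically; for a k-bit key this is roughly 2^(k/2) iterations, hence the halving of bit security. The function name is illustrative, and the simulation itself is exponential in n, so it only works for toy sizes.

```python
import math

import numpy as np


def grover_success_probability(n_qubits: int, marked: int) -> tuple[int, float]:
    """Simulate Grover search over 2^n_qubits items with one marked item.

    Returns the number of iterations used (~π/4·√N) and the probability
    of measuring the marked item afterwards.
    """
    size = 2 ** n_qubits
    state = np.full(size, 1 / math.sqrt(size))  # uniform superposition
    iterations = math.floor(math.pi / 4 * math.sqrt(size))
    for _ in range(iterations):
        state[marked] *= -1               # oracle: flip the sign of the marked item
        state = 2 * state.mean() - state  # diffusion: reflect about the mean amplitude
    return iterations, float(state[marked] ** 2)


for n in (4, 8, 12):
    its, p = grover_success_probability(n, marked=3)
    print(f"N = 2^{n}: {its} iterations instead of ~2^{n - 1} guesses, success ≈ {p:.3f}")
```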

Hash functions

Hash functions are also affected, but less critically:

- Preimage attack: 2^n → 2^(n/2) (n = output length in bits)

- Collision attack remains ~2^(n/2)

Hence:

- SHA-256 → reduced but still usable

- SHA-384 / SHA-512 → recommended for long-term security

Why this difference matters so much

Modern cryptography assumes that certain problems are not efficiently solvable.

Quantum computers change that assumption:

- Factorization → efficiently solvable

- Discrete logarithm → efficiently solvable

The mathematical basis of many deployed systems then collapses.

Conclusion:

The difference is not “faster hardware” but a fundamentally different algorithmic efficiency.

Why lattice-based cryptography is considered quantum-resistant

Modern PQC schemes such as Kyber (ML-KEM) and Dilithium (ML-DSA) are not based on factorization or discrete logarithms but on problems from linear algebra—more precisely lattices.

You can picture a lattice as a regular grid of points in high-dimensional space. Each point is a combination of basis vectors.

Core idea: computing with noisy equations

The central problem behind many PQC schemes is learning with errors (LWE).

A linear system is deliberately perturbed with noise:

b = A · s + e (mod q), where:

- A → known public matrix

- s → secret vector (the key)

- e → random noise (small errors)

- q → modulus of the arithmetic

The goal is to reconstruct the secret vector s from A and b.

Why is this hard?

Without noise this would be an ordinary linear system and easy to solve. Noise makes the problem unstable:

- Small errors distort every equation

- Many solutions look plausible

- The search space becomes huge

Geometrically, this corresponds to finding a lattice point “close enough” to a noisy target.

Intuition:

You seek an exact point but only get slightly shifted clues. That inaccuracy makes the problem extremely hard.
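The effect of the noise can be made concrete with modular Gaussian elimination. This sketch (standard library only) solves an 8 × 8 system modulo the prime q = 3329, the modulus ML-KEM uses, once without and once with small noise. Without noise, s comes out exactly; with noise, elimination mixes the small errors across all equations (the result is s + A⁻¹·e), so the output is no longer s. Caveat: this toy does not prove hardness, since with only 8 equations one could still guess the noise; real security comes from large dimensions and more equations than unknowns. The helper `solve_mod` is hypothetical.

```python
import random

Q = 3329  # prime modulus (the value used by ML-KEM); any prime works here


def solve_mod(a_rows, b_vec, q=Q):
    """Solve A·x ≡ b (mod q) for square A by Gaussian elimination; None if singular."""
    n = len(a_rows)
    m = [row[:] + [bv] for row, bv in zip(a_rows, b_vec)]  # augmented matrix
    for col in range(n):
        pivot = next((r for r in range(col, n) if m[r][col] % q), None)
        if pivot is None:
            return None
        m[col], m[pivot] = m[pivot], m[col]
        inv = pow(m[col][col], -1, q)  # modular inverse (Python 3.8+)
        m[col] = [(v * inv) % q for v in m[col]]
        for r in range(n):
            if r != col and m[r][col]:
                factor = m[r][col]
                m[r] = [(vr - factor * vc) % q for vr, vc in zip(m[r], m[col])]
    return [row[n] for row in m]


rng = random.Random(42)
n = 8
s = [rng.randrange(Q) for _ in range(n)]  # secret vector
while True:
    A = [[rng.randrange(Q) for _ in range(n)] for _ in range(n)]
    if solve_mod(A, [0] * n) is not None:  # keep only invertible matrices
        break
e = [rng.choice([-2, -1, 1, 2]) for _ in range(n)]  # small, non-zero noise

b_clean = [sum(a * x for a, x in zip(row, s)) % Q for row in A]
b_noisy = [(bc + ei) % Q for bc, ei in zip(b_clean, e)]

print("without noise, s recovered:", solve_mod(A, b_clean) == s)
print("with noise,    s recovered:", solve_mod(A, b_noisy) == s)
```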

Why quantum computers do not help here (today)

For problems like factorization, Shor provides an efficient quantum algorithm. For lattice problems such as LWE, that is not currently the case.

The best known attacks—classical and quantum—still require very high effort.

- No known polynomial-time solution

- Attacks remain exponential or sub-exponential

- Parameters can be increased deliberately

Key point:

Lattice-based cryptography stays hard under quantum attack because no “Shor equivalent” for lattice problems is known.

From LWE to real schemes (Kyber / Dilithium)

In practice optimized variants are used, e.g.:

- Ring-LWE (RLWE)

- Module-LWE (MLWE)

These variants add algebraic structure that shrinks keys and improves efficiency; to current knowledge, this structure does not substantially weaken security.

- Kyber → based on module-LWE (key exchange)

- Dilithium → based on module-LWE and module-SIS (signatures)
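To see how an LWE instance turns into encryption at all, here is a toy version of Regev’s LWE encryption scheme (one bit at a time) in NumPy. It is not ML-KEM: Kyber works with module lattices over polynomial rings, compresses ciphertexts, and applies a Fujisaki–Okamoto-style transform. The parameters q = 3329, n = 16, m = 32 are illustrative and offer no security.

```python
import numpy as np

rng = np.random.default_rng(7)
q, n, m = 3329, 16, 32  # toy parameters: modulus, secret length, number of samples

# Key generation: secret s, public key (A, b = A·s + e mod q)
s = rng.integers(0, q, n)
A = rng.integers(0, q, (m, n))
e = rng.integers(-2, 3, m)  # small noise in {-2, ..., 2}
b = (A @ s + e) % q


def encrypt(bit: int):
    r = rng.integers(0, 2, m)  # random subset of the public samples
    u = (r @ A) % q
    v = (r @ b + bit * (q // 2)) % q
    return u, v


def decrypt(u, v) -> int:
    d = (v - u @ s) % q  # equals r·e + bit·⌊q/2⌋ (mod q)
    # |r·e| <= 64 here, so d stays near 0 for bit 0 and near q/2 for bit 1
    return int(q // 4 < d < 3 * q // 4)


bits = [1, 0, 1, 1, 0]
print(bits, [decrypt(*encrypt(bit)) for bit in bits])
```

Decryption works only because the accumulated noise r·e stays far below q/4; choosing q, dimensions, and noise so that this holds while LWE stays hard is exactly the parameter work real schemes do.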

Why these schemes use more data

A downside of PQC is larger payloads:

- Larger public keys

- Larger ciphertexts

- Larger signatures

Vectors of polynomials with hundreds of coefficients must be stored instead of a single large integer (RSA) or a single curve point (ECC).
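The byte counts follow directly from this structure. A short calculation, assuming the ML-KEM parameter sets from FIPS 203 (k = module rank, d_u and d_v = bits per compressed ciphertext coefficient); the dictionary and function names are illustrative.

```python
# Encoding sizes of ML-KEM (FIPS 203): polynomials have 256 coefficients mod q = 3329,
# stored with 12 bits each in the public key; ciphertexts use compressed coefficients.
N_COEFFS = 256

PARAMS = {  # name: (k, d_u, d_v)
    "ML-KEM-512": (2, 10, 4),
    "ML-KEM-768": (3, 10, 4),
    "ML-KEM-1024": (4, 11, 5),
}


def encaps_key_bytes(k: int) -> int:
    # k polynomials x 256 coefficients x 12 bits, plus a 32-byte seed for matrix A
    return k * N_COEFFS * 12 // 8 + 32


def ciphertext_bytes(k: int, d_u: int, d_v: int) -> int:
    # k polynomials with d_u bits/coefficient plus one polynomial with d_v bits
    return N_COEFFS * (k * d_u + d_v) // 8


for name, (k, d_u, d_v) in PARAMS.items():
    print(f"{name}: public key {encaps_key_bytes(k)} B, "
          f"ciphertext {ciphertext_bytes(k, d_u, d_v)} B")
print("X25519: public key 32 B")
```

For ML-KEM-768 this gives 1184-byte public keys and 1088-byte ciphertexts, roughly 35 times the 32 bytes of an X25519 key.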

Simple example (heavily simplified)

A minimal sketch might look like this:

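# heavily simplified, illustration only
import numpy as np

A = np.random.randint(0, 100, (3, 3))  # public matrix
s = np.random.randint(0, 10, (3, 1))   # secret vector
e = np.random.randint(-1, 2, (3, 1))   # small noise in {-1, 0, 1}
b = A @ s + e

print("A:", A)
print("b:", b)

# The attacker sees only A and b. At this toy size (3 dimensions, no modulus)
# s is still easy to find, e.g. by trying all 1,000 candidates for s;
# real hardness needs modular arithmetic and large dimensions.

Important: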

This example is heavily simplified. Real schemes use large dimensions, modular arithmetic, and carefully chosen parameters.

Why developers should bet on this

- No known efficient quantum attacks

- Standardization by NIST

- Already integrable into real systems

For developers this means: The future of public-key cryptography is very likely lattice-based.

Concrete attack scenarios with quantum computers

The quantum threat is not only theoretical. Many systems already face real risk—especially from “harvest now, decrypt later.”

Attack scenario 1: Recorded TLS connections

An attacker records encrypted connections today, e.g. HTTPS traffic.

- 2026: TLS handshake uses RSA or classical ECDH

- Attacker stores all traffic

- 2035+ (hypothetical): a cryptographically relevant quantum computer becomes available

- → retrospective decryption becomes possible

Problem:

Security was only time-limited—not permanent.

Even if data looks safe today, confidentiality can be lost entirely in the future.

Attack scenario 2: Missing forward secrecy

Without forward secrecy the risk is even higher.

- Server uses a static RSA key

- Attacker obtains the private key later

- → all old connections can be decrypted

Forward secrecy:

Each session uses a new ephemeral key.

However: Forward secrecy does not protect against quantum attacks on the key exchange itself.

Attack scenario 3: Signatures and software updates

Digital signatures protect software updates, firmware, and container images today.

- Code is signed with ECDSA

- The public key is public

- A quantum computer computes the private key

- → attacker can forge valid signatures

Consequence:

Tampered updates can appear trustworthy.

This especially affects:

- IoT devices

- Industrial systems

- Software supply chains

Attack scenario 4: VPNs and long-lived keys

Many VPN systems use long-lived keys or certificates.

- Attacker records encrypted traffic

- Keys are broken later

- → entire communication can be reconstructed

Especially critical:

- Government communication

- Trade secrets

- Medical data

Attack scenario 5: PKI infrastructure

Public-key infrastructure relies on trusted certificates.

- Root CA uses RSA or ECC

- Quantum computer compromises the private key

- → entire certificate chain becomes untrustworthy

Worst case:

Attacker can issue arbitrary certificates (e.g. for HTTPS).

Why these scenarios matter already today

The critical factor is data lifetime.

- Short-lived data → low risk

- Long-term confidential data → high risk

Examples of critical data:

Health records, contracts, industrial secrets, government communication

Conclusion for developers

The greatest danger is not tomorrow’s attack but delayed migration.

- Attackers collect data today

- Attacks happen in the future

Key point:

If data must stay protected longer than the expected time until practical quantum computers exist, PQC is already necessary today.

Performance and trade-offs of post-quantum cryptography

Post-quantum cryptography is not a simple drop-in replacement for RSA or ECC. It has real effects on performance, memory, and networking.

Size comparison: classical vs. PQC schemes

| Scheme | Public key | Signature / ciphertext | Comment |
|---|---|---|---|
| ECDSA (P-256) | 33–65 bytes | ~64–72 bytes (signature) | Very compact |
| ML-DSA (Dilithium) | ~1.3–2.6 kB | ~2.4–4.6 kB (signature) | Much larger |
| ML-KEM (Kyber) | ~0.8–1.6 kB | ~0.8–1.6 kB (ciphertext) | For key exchange |

Size differences directly affect network protocols and storage needs.

Factor: PQC keys are typically 10–100× larger than classical keys.

Impact on TLS and networking

Larger keys and signatures lead to:

- Larger TLS handshakes

- More network packets

- Higher connection-setup latency

Example:

- ECDSA certificate → a few hundred bytes

- Dilithium certificate → several kilobytes

This can matter especially for:

- Mobile networks

- IoT devices

- High-frequency APIs
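A back-of-the-envelope calculation makes the handshake growth concrete. It assumes the hybrid TLS 1.3 group X25519MLKEM768 (ML-KEM-768 and X25519 shares sent together) and a two-certificate chain signed throughout with one scheme. It counts only raw key and signature bytes, so real handshakes are larger; the helper name is illustrative.

```python
# Rough byte counts for TLS 1.3 handshake elements (raw key/signature sizes only;
# certificate encoding, extensions and record overhead are ignored).
X25519_SHARE = 32
MLKEM768_ENCAPS_KEY, MLKEM768_CIPHERTEXT = 1184, 1088

SIG_SCHEMES = {  # name: (public key bytes, signature bytes)
    "ECDSA P-256": (65, 72),  # uncompressed point, maximum DER signature
    "ML-DSA-65": (1952, 3309),
}

# Hybrid X25519MLKEM768: both shares travel side by side
client_share = MLKEM768_ENCAPS_KEY + X25519_SHARE
server_share = MLKEM768_CIPHERTEXT + X25519_SHARE
print(f"key_share X25519: {X25519_SHARE} B each way")
print(f"key_share X25519MLKEM768: client {client_share} B, server {server_share} B")


def auth_bytes(pk: int, sig: int, chain_len: int = 2) -> int:
    # Each certificate carries one public key and one CA signature;
    # CertificateVerify adds one more signature made with the leaf key.
    return chain_len * (pk + sig) + sig


for name, (pk, sig) in SIG_SCHEMES.items():
    print(f"{name}: ~{auth_bytes(pk, sig)} B of keys and signatures for a 2-cert chain")
```

The key exchange grows from 32 bytes to about 1.1–1.2 kB per direction, while authentication grows from a few hundred bytes to well over 10 kB, which is why the signature side usually dominates handshake size.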

CPU and compute cost

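PQC is not always slower, but cost is distributed differently and depends strongly on the scheme:

- Key generation → fast for ML-KEM and ML-DSA, noticeably expensive for Falcon

- Signing → moderate for ML-DSA, slow for SPHINCS+

- Verification → often as fast as or faster than classical schemes (e.g. ML-DSA vs. ECDSA)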

That means:

- Servers doing many verifications may benefit partly

- Low-CPU devices can be stressed

Memory and infrastructure cost

Larger keys impose extra requirements:

- More RAM for keys and buffers

- Larger certificates in PKI systems

- More database storage

Hybrid cryptography as a transition

In practice a hybrid approach is common today:

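- a classical key exchange (e.g. X25519), combined with

- a PQC KEM (e.g. ML-KEM/Kyber)

The two shared secrets are combined (e.g. concatenated and fed into the key derivation), so the session stays secure as long as at least one of the two schemes remains unbroken.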

Advantage: compatibility with existing systems

Downside: even larger payloads and more complex implementation
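A minimal sketch of the combining step, assuming the simple "concatenate, then KDF" approach (the TLS 1.3 hybrid designs likewise concatenate the two shared secrets before the key schedule). HKDF (RFC 5869) is implemented with the standard library; the label `hybrid-demo`, the transcript value, and the random placeholder secrets are hypothetical, and this is not a vetted combiner for production.

```python
import hashlib
import hmac
import os

HASH_LEN = 48  # SHA-384 output size in bytes


def hkdf_sha384(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) with SHA-384: extract a PRK, then expand it to `length` bytes."""
    if length > 255 * HASH_LEN:
        raise ValueError("requested output too long for HKDF")
    prk = hmac.new(salt or b"\x00" * HASH_LEN, ikm, hashlib.sha384).digest()
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha384).digest()
        okm += block
        counter += 1
    return okm[:length]


def combine_secrets(pq_secret: bytes, classical_secret: bytes, transcript: bytes) -> bytes:
    # Intended property: the derived key stays unpredictable as long as
    # at least one of the two input secrets is unknown to the attacker.
    return hkdf_sha384(pq_secret + classical_secret, salt=b"",
                       info=b"hybrid-demo " + transcript)


# Placeholders: in a real system these come from ML-KEM decapsulation and X25519
ss_mlkem = os.urandom(32)
ss_x25519 = os.urandom(32)
session_key = combine_secrets(ss_mlkem, ss_x25519, transcript=b"handshake-hash")
print(session_key.hex())
```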

Typical practical mistakes

- Deploying PQC without performance tests

- Underestimating handshake size

- No fallback strategy

- Ignoring crypto agility

Engineering recommendations

- Always benchmark (TLS, API, load)

- Test hybrid crypto in staging first

- Measure bandwidth and latency

- Watch memory usage

Key point:

PQC is necessary for security but has real costs. Good architecture determines whether those costs stay manageable.

Threat model: who attacks you and what is realistic?

Security decisions only make sense if you know who you defend against. In the quantum context attackers differ greatly in capability, time horizon, and resources.

Attacker classes

1. Passive attacker (traffic capture)

The simplest but often underestimated adversary:

- records encrypted traffic

- does not actively interfere

- waits for future decryption capability

Risk:

“Harvest now, decrypt later”—data is collected today and decrypted later.

This attacker is already realistic today (e.g. internet backbone, nation-state actors).

2. Active attacker (MITM)

An active attacker can manipulate connections:

- performs man-in-the-middle attacks

- forces weaker algorithms

- exploits implementation bugs

With quantum computers this attacker can additionally:

- reconstruct keys

- actively decrypt connections

3. Nation-state actor (high-end adversary)

The most relevant opponent in the quantum context:

- has large compute resources

- can store data for years

- is likely to be among the first to gain access to cryptographically relevant quantum computers

Reality:

The first practical quantum attacks will very likely come from nation-state actors.

Attack targets

Not all data is equally critical. What matters is protection lifetime.

- Short term (minutes–days): low risk

- Medium term (months–years): moderate risk

- Long term (10+ years): high risk

Critical data:

Health data, contracts, industrial secrets, government communication

When is PQC really necessary?

A simple decision rule for developers:

def pqc_decision(protection_lifetime_years, years_until_quantum_computer):
    if protection_lifetime_years > years_until_quantum_computer:
        return "deploy PQC now"
    return "plan migration"

“Time until quantum computer” is deliberately vague; that is what makes planning hard.
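The rule above ignores how long the migration itself takes. A widely used refinement is Mosca’s inequality (after Michele Mosca): if x + y > z, where x is the protection lifetime, y the migration time, and z the time until a cryptographically relevant quantum computer, data is already at risk. A minimal sketch; the function name and all numbers are hypothetical.

```python
def mosca_check(shelf_life_years: float, migration_years: float, years_to_crqc: float) -> str:
    """Mosca's inequality: act now if x + y > z.

    x = how long data must stay confidential (shelf life)
    y = how long the migration to PQC will take
    z = estimated time until a cryptographically relevant quantum computer
    """
    if shelf_life_years + migration_years > years_to_crqc:
        return "deploy PQC / hybrid now"
    return "plan migration"


# Hypothetical numbers: 10-year shelf life, 5-year migration, 12 years to a CRQC
print(mosca_check(10, 5, 12))  # → deploy PQC / hybrid now
# Same migration effort, but data only needs protection for 1 year
print(mosca_check(1, 5, 12))   # → plan migration
```

The first case triggers action even though 10 years of shelf life alone would be below the 12-year estimate: the migration time eats the remaining margin.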

Typical misconceptions

- “Quantum computers are far off” → migration takes longer than you think

- “Our data is not interesting” → often underestimated

- “Forward secrecy is enough” → does not cover all scenarios

Risk assessment in practice

A realistic model combines several factors:

- Data value

- Protection lifetime

- Attacker capability

- System exposure (internet, internal network, IoT)

That yields:

- High risk: deploy PQC / hybrid immediately

- Medium risk: prepare migration

- Low risk: monitor and plan

Key point:

Security against quantum computers is not all-or-nothing; it is a matter of time, data value, and attacker.

Practical implementation: from theory to prototype

Post-quantum cryptography is not only a research topic—it is already available in libraries, test environments, and early integrations. For developers it is essential to classify the examples correctly:

- Educational examples explain the mathematical principle

- PoC examples show the API and flow

- Integration examples show how PQC fits into TLS, services, and infrastructure

Important: The following code blocks are deliberately not drop-in production solutions.

They make concepts, API behavior, integration points, and typical migration steps tangible.

Educational example: noisy linear equations as the core idea of LWE

The following code is not a real crypto library—only a simplified illustration of the math behind lattice schemes. It shows why a linear system with small noise suddenly becomes hard to reconstruct.

import numpy as np

# Educational example: heavily simplified
# A = public matrix
# s = secret vector
# e = small noise
# b = observed value
A = np.random.randint(0, 100, (3, 3))
s = np.random.randint(0, 10, (3, 1))
e = np.random.randint(-1, 2, (3, 1))
b = A @ s + e

print("Public matrix A:")
print(A)
print("Observed vector b:")
print(b)

# An attacker only knows A and b here.
# Without noise, s would follow directly from solving A · s = b.
# With noise, exact solving no longer yields s; in this 3-dimensional toy s can
# still be found by trial (1,000 candidates), but with large dimensions and
# modular arithmetic, reconstruction becomes infeasible.

Context: This example only illustrates “structure + noise = hard problem.”

Real PQC schemes use modular arithmetic, polynomials, large dimensions, and carefully chosen parameters.

PoC: post-quantum KEM and signature in Python with liboqs

This example shows a minimal proof of concept with liboqs. It helps you understand the basic flow of a quantum-resistant key exchange and a signature:

- Generate a key pair

- Encapsulate and decapsulate a shared secret

- Sign and verify a message

Such tests are especially useful in early evaluation phases for backend services, security gateways, or internal toolchains.

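import oqs


def pick(preferred, available):
    # Prefer the FIPS 203/204 names; older liboqs releases only offer the
    # pre-standard names (Kyber768, Dilithium5).
    for name in preferred:
        if name in available:
            return name
    raise RuntimeError(f"none of {preferred} is enabled in this liboqs build")


KEM_ALG = pick(["ML-KEM-768", "Kyber768"], oqs.get_enabled_kem_mechanisms())
SIG_ALG = pick(["ML-DSA-87", "Dilithium5"], oqs.get_enabled_sig_mechanisms())

# -----------------------------
# 1) KEM / key exchange
# -----------------------------
# Goal: two parties derive a shared secret without sending it directly.
with oqs.KeyEncapsulation(KEM_ALG) as alice:
    public_key = alice.generate_keypair()

    # Peer (Bob) encapsulates a shared secret using only Alice's public key
    with oqs.KeyEncapsulation(KEM_ALG) as bob:
        ciphertext, shared_secret_bob = bob.encap_secret(public_key)

    # Alice decapsulates the ciphertext with her secret key
    shared_secret_alice = alice.decap_secret(ciphertext)

assert shared_secret_alice == shared_secret_bob
print(f"KEM OK ({KEM_ALG}): shared secrets match")

# -----------------------------
# 2) Signature / authenticity
# -----------------------------
# Goal: sign a message so authenticity and integrity can be verified.
with oqs.Signature(SIG_ALG) as signer:
    public_sig_key = signer.generate_keypair()
    message = b"Confidential message"
    signature = signer.sign(message)

# Verification needs only the public key, so a separate object is used
with oqs.Signature(SIG_ALG) as verifier:
    valid = verifier.verify(message, signature, public_sig_key)

print("Signature valid:", valid)

Context: Good for local tests, API understanding, and first performance measurements.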

Not shown: secure key handling, persistent storage, HSM integration, error handling, logging, and lifecycle management.

Integration example: PQC in Go with CIRCL

The same idea in Go—especially for developers working on services, gateways, or security components who want to integrate PQC early into existing backends.

package main

import (
	"bytes"
	"fmt"

	"github.com/cloudflare/circl/kem/kyber/kyber1024"
)

func main() {
	// CIRCL exposes Kyber through the generic kem.Scheme interface;
	// randomness is drawn from crypto/rand internally.
	scheme := kyber1024.Scheme()

	// Receiving side generates the KEM key pair
	pub, priv, err := scheme.GenerateKeyPair()
	if err != nil {
		panic(err)
	}

	// "Sending" side encapsulates a shared secret to the public key
	ct, ssEnc, err := scheme.Encapsulate(pub)
	if err != nil {
		panic(err)
	}

	// "Receiving" side decapsulates the shared secret
	ssDec, err := scheme.Decapsulate(priv, ct)
	if err != nil {
		panic(err)
	}

	if !bytes.Equal(ssEnc, ssDec) {
		fmt.Println("Error: shared secrets do not match")
		return
	}

	fmt.Println("KEM OK: shared secrets match")
}

Context: Closer to real service integration than pure math demo code.

Typical next steps: benchmarking, load tests, error paths, configuration management, embedding in handshake or session logic.

Integration example: hybrid TLS in lab or staging

PQC migration rarely starts at the application layer; it often starts with transport encryption. A sensible first step is a hybrid TLS test environment where classical and post-quantum schemes are evaluated in parallel.

The following is not a full production rollout but a typical lab/staging approach:

- Check whether your OpenSSL build supports PQC (natively from OpenSSL 3.5, otherwise via a provider such as oqsprovider)

- Configure hybrid groups

- Test connection setup and interoperability

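# List available providers
openssl list -providers

# List the KEM algorithms this build offers (ML-KEM variants should appear if supported)
openssl list -kem-algorithms

# Example: point OpenSSL config so a PQC provider (e.g. oqsprovider) loads
export OPENSSL_CONF=/etc/ssl/openssl.cnf

# Then test hybrid groups in a test environment, e.g.
# (the group name depends on the OpenSSL/provider version):
openssl s_client -connect server.example.com:8443 -groups X25519MLKEM768

A minimal Python client for a first connection test might look like this: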

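import socket
import ssl

HOST = "server.example.com"  # test server; its certificate must match this name
PORT = 8443

# Default context: certificate and hostname verification enabled
context = ssl.create_default_context()

# Require TLS 1.3. Its cipher suites (e.g. TLS_AES_256_GCM_SHA384) cannot be
# selected via set_ciphers(), which only covers TLS 1.2 and older.
context.minimum_version = ssl.TLSVersion.TLSv1_3

# Hybrid groups such as X25519MLKEM768 depend heavily on the OpenSSL build and are
# usually enabled via the OpenSSL configuration (OPENSSL_CONF); set_ecdh_curve()
# only accepts a single classical curve name.

with socket.create_connection((HOST, PORT)) as sock:
    with context.wrap_socket(sock, server_hostname=HOST) as conn:
        print("Negotiated:", conn.version(), conn.cipher())
        conn.sendall(f"GET / HTTP/1.0\r\nHost: {HOST}\r\n\r\n".encode())
        response = conn.recv(4096)
        print(response.decode(errors="ignore"))

Context: Shows how to prepare a hybrid TLS evaluation technically.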

Production also needs: client compatibility tests, certificate strategy, monitoring, load tests, rollback paths, and careful OpenSSL/provider version checks.

What to test in PQC code

The value of PQC prototypes comes not from merely running the library but from targeted technical tests.

- API stability: how do libraries and parameter names change?

- Performance: CPU time, RAM, and latency?

- Handshake size: how much do keys, ciphertexts, and certificates grow?

- Error paths: what happens with unsupported groups or versions?

- Interoperability: do clients, servers, proxies, and load balancers work together?

Practical rule: A good PQC prototype answers not only “does it run?” but “how does it fit our real system?”
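For the performance questions, a tiny measurement harness is often enough to start. The sketch below is a hypothetical helper (standard library only) that reports median and 95th-percentile latency plus output size for any callable; the SHA-384 call is only a stand-in for a PQC operation such as encapsulation or signing. Single-process microbenchmarks do not replace load tests.

```python
import hashlib
import statistics
import time


def benchmark(label, operation, runs=200):
    """Time a callable; report median/p95 latency in µs and output size in bytes."""
    timings_us = []
    result = b""
    for _ in range(runs):
        start = time.perf_counter_ns()
        result = operation()
        timings_us.append((time.perf_counter_ns() - start) / 1000)
    timings_us.sort()
    median = statistics.median(timings_us)
    p95 = timings_us[int(0.95 * (len(timings_us) - 1))]
    size = len(result) if isinstance(result, (bytes, bytearray)) else None
    print(f"{label}: median {median:.1f} µs, p95 {p95:.1f} µs, output {size} B")
    return median, p95, size


# Stand-in operation; swap in e.g. a KEM encapsulation or signing call from your PQC library
payload = b"x" * 4096
benchmark("SHA-384 over 4 kB", lambda: hashlib.sha384(payload).digest())
```

Recording output size next to latency matters for PQC because ciphertexts and signatures, not just CPU time, drive handshake and storage costs.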

PQC migration checklist for teams

Successful PQC migration does not start by swapping every algorithm at once but with a clean inventory and a controlled rollout plan. This checklist is for developers, architects, and infrastructure teams.

| Step | Why it matters technically | Typical artifacts | Concrete action |
|---|---|---|---|
| 1. Crypto inventory | You need to know all RSA/ECC dependencies to plan migration realistically. | TLS configs, certificates, SSH keys, signature paths, JWT/token keys | Document every crypto component by algorithm, key length, and deployment location. |
| 2. Data classification | Migration pressure depends on protection lifetime and data value. | Personal data, contracts, telemetry, backups, logs, secrets | Classify by protection lifetime and prioritize long-lived data needing 10+ years confidentiality. |
| 3. Crypto agility | Without swappable algorithms every later change is costly and error-prone. | Config files, crypto abstractions, policy layers | Make algorithms, parameters, and providers replaceable via config or clean interfaces. |
| 4. Hybrid pilot | Hybrid crypto reduces migration risk and yields early interoperability experience. | Hybrid TLS, test certificates, PQC-capable clients/servers | In staging or lab, first test X25519 + ML-KEM or classical + PQC in parallel. |
| 5. Key management | PQC affects not only algorithms but key storage, rotation, and lifecycle. | HSM, TPM, PKI, secrets management, expiry | Update key rotation, validity, backup/recovery, and HSM/TPM strategy. |
| 6. Performance and compatibility tests | Larger keys and signatures affect latency, memory, and networking. | Handshake time, packet sizes, CPU load, RAM, client compatibility | Benchmark and test PQC under load, on mobile clients, and behind proxies. |
| 7. Rollout plan | Without fallbacks and monitoring, crypto changes quickly become an operations risk. | Feature flags, deployment stages, observability, rollback paths | Define phased rollout, prepare fallbacks, monitor errors, latency, and interoperability. |

Practical rule: PQC migration is not a single ticket but a multi-step infrastructure and architecture project.
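Crypto agility (step 3) largely comes down to never hard-coding an algorithm where data is stored or verified. A minimal sketch of one common pattern, assuming a hypothetical policy: each stored value carries an algorithm tag, new values use the current policy, and verification dispatches on the tag, so old and new algorithms can coexist during migration. Hashes stand in here; the same idea applies to KEM and signature identifiers. All names are illustrative.

```python
import hashlib
import hmac

# Hypothetical policy: which algorithm new data uses, and which tags are still accepted
CURRENT_HASH = "sha384"
ALLOWED_HASHES = {"sha256", "sha384", "sha512"}


def tagged_digest(data: bytes, alg: str = CURRENT_HASH) -> str:
    """Store the algorithm name next to the value, e.g. 'sha384:ab12...'."""
    return f"{alg}:{hashlib.new(alg, data).hexdigest()}"


def verify_tagged(data: bytes, tagged: str) -> bool:
    alg, _, expected = tagged.partition(":")
    if alg not in ALLOWED_HASHES:  # retired algorithms are rejected by policy
        return False
    actual = hashlib.new(alg, data).hexdigest()
    return hmac.compare_digest(actual, expected)


old = tagged_digest(b"config v1", alg="sha256")  # written under an earlier policy
new = tagged_digest(b"config v1")                # written under today's policy
print(old[:14], verify_tagged(b"config v1", old))
print(new[:14], verify_tagged(b"config v1", new))
```

Retiring an algorithm then becomes a policy change (removing it from the allowed set) plus a re-tagging job, instead of a code change in every consumer.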

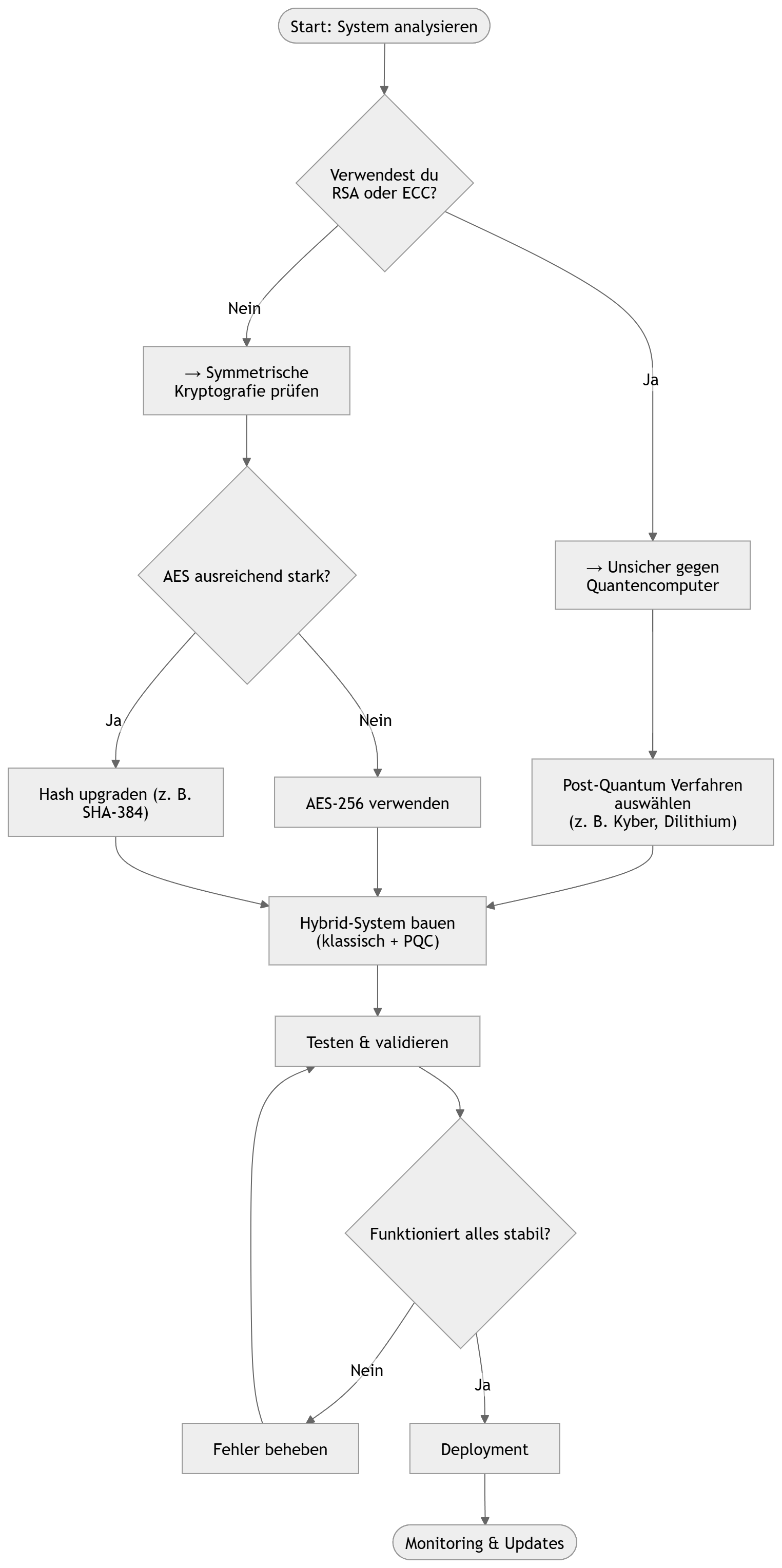

Migration flow (visual)

The typical order for a clean migration: gain visibility, prioritize risks, test hybrid schemes, then roll out in a controlled way.

How to read the flow:

Analysis: First clarify where RSA, ECC, classical certificates, or classical signatures appear in the system.

Assessment: Then decide which data and connections need immediate action—especially with long protection lifetimes.

Hardening: Raise symmetric schemes to robust parameters such as AES-256 and SHA-384/512.

Introduction: Do not roll out PQC blindly; introduce it hybridly first so classical and post-quantum schemes run in parallel.

Validation: Before production, test interoperability, performance, and error paths.

Operations: Only then a controlled rollout with monitoring, feature flags, and clear rollback paths.

What if you do nothing?

Skipping a PQC strategy means not only future cryptographic risk but also architectural and operational risk.

Confidentiality risk: Data that must stay secret long-term can be captured today and decrypted later with stronger adversaries or future quantum computers.

Operational risk: Starting migration too late creates massive time pressure across PKI, clients, gateways, APIs, certificate chains, and processes.

Architecture risk: Without crypto agility every later algorithm change is expensive, fragile, and hard to test.

The most dangerous mistake is therefore not running classical schemes today, but deferring migration until it must be done under time pressure without clean architecture.

Conclusion and next steps for developers

Quantum computers are no longer an abstract future idea but a real planning factor for cryptography in modern systems. The real danger is not that every encryption breaks tomorrow, but that today’s assumptions for public-key schemes are not stable long-term.

Technically the difference is fundamental: Classical cryptography such as RSA and ECC rests on problems that are practically intractable for classical computers. Quantum algorithms such as Shor change that assumption. At the same time, post-quantum schemes such as ML-KEM and ML-DSA show that quantum-resistant alternatives on classical hardware are implementable today—with real trade-offs in key sizes, signature sizes, network overhead, and integration effort.

For developers and architects this means: The top priority is not an immediate rebuild of all systems but building crypto agility. Making algorithms swappable, testing hybrid schemes, managing the key lifecycle cleanly, and knowing critical data flows lays the groundwork for controlled PQC migration.

Practical takeaway:

Public-key cryptography must be replaced or hybridized long-term.

Symmetric schemes remain usable but should use strong parameters such as AES-256 and SHA-384/512.

What matters is not panic but early technical preparation.

The most sensible next step is therefore clear:

- inventory existing cryptographic dependencies

- identify data that needs long-term protection

- build hybrid PQC prototypes in staging

- test performance, interoperability, and rollback paths

Starting these steps today reduces future risk and avoids the organization having to migrate later under extreme time pressure.

Sources and notes

Recommendations in this article follow public guidance from NIST, NSA/CNSA, BSI, IETF, and the Open Quantum Safe ecosystem.

License note: Code samples rely on open-source libraries (e.g. liboqs: MIT, CIRCL: BSD-3-Clause). Examples are simplified for illustration. Production use requires security reviews, HSM strategy, key lifecycle management, and regular updates.

Author: Ruedi von Kryentech

Created: 6 Apr 2026 · Last updated: 6 Apr 2026

Technical content as of the last update.