Embeddings and vector spaces in neural networks

How discrete tokens become geometric representations and why these vector spaces underpin modern LLMs

Summary: Embeddings map discrete symbols to continuous vectors. Only then can neural networks process language, categories, or other structured data mathematically.

Further reading: For architectural context, see “How does a transformer work?”; for the underlying neural-network stack, see “Neural networks behind LLMs.”

1. Why vectors at all?

Neural networks cannot work directly with words like “bank,” “voltage,” or “transformer.” They need numeric inputs. A discrete token is therefore mapped to a continuous vector. That mapping is what an embedding does.

This step is crucial: only once tokens are vectors can language be processed mathematically by linear layers, attention mechanisms, and optimization algorithms.

2. Embedding matrix and lookup

Formally, a token \(x_i\) is mapped to a vector \(e_i\):

\[ x_i \rightarrow e_i \in \mathbb{R}^{d} \]

Technically, an embedding matrix \(E\) is used:

\[ E \in \mathbb{R}^{V \times d} \]

\(V\) is the vocabulary size and \(d\) the embedding dimension. Each token corresponds to one row in this matrix. The embedding itself is then simply a lookup:

\[ e_i = E[x_i] \]

This seemingly simple operation is equivalent to multiplying a one-hot encoding of the token by \(E\). It is therefore a trainable linear projection into a \(d\)-dimensional feature space.
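A minimal NumPy sketch of the lookup \(e_i = E[x_i]\). The sizes, the random matrix, and the token ids are assumptions chosen for illustration; in a real model \(E\) is learned. The sketch also checks numerically that a row lookup gives the same vector as a one-hot code multiplied by \(E\).

```python
import numpy as np

V, d = 6, 4                      # toy vocabulary size and embedding dimension (assumed values)
rng = np.random.default_rng(0)
E = rng.normal(size=(V, d))      # embedding matrix E in R^{V x d}; random here, learned in practice

x_i = 3                          # a token id in [0, V)
e_lookup = E[x_i]                # e_i = E[x_i]: plain row lookup

one_hot = np.zeros(V)
one_hot[x_i] = 1.0
e_matmul = one_hot @ E           # the same row, written as a linear map of the one-hot code

token_ids = np.array([3, 0, 3, 5])
batch = E[token_ids]             # a whole sequence at once, shape (4, d)
```

Frameworks implement this as an indexed read rather than a matrix product, because the one-hot vector is almost entirely zeros.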

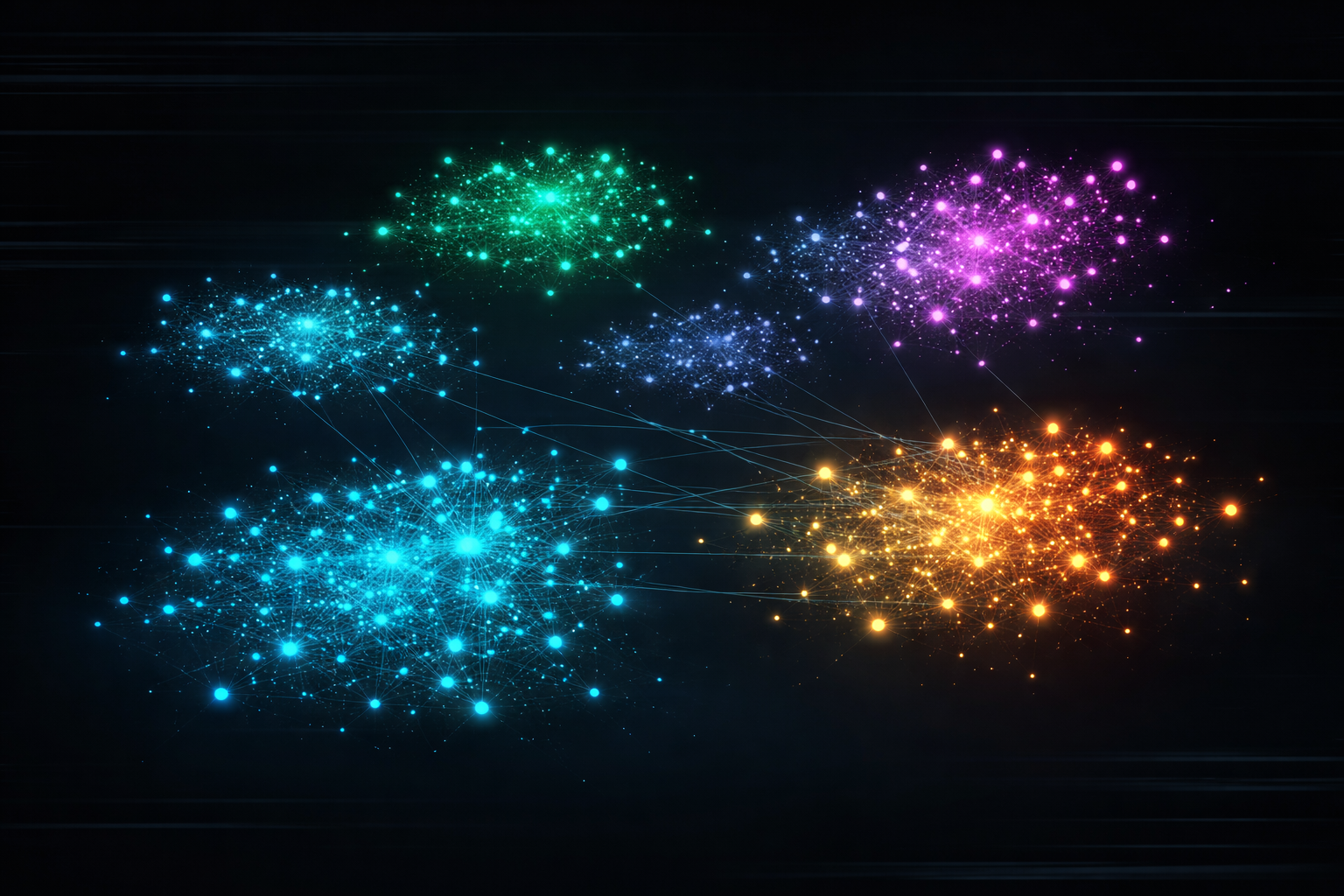

3. Geometric meaning

The central idea is: meaning is translated into geometry. Similar terms lie closer together in vector space than dissimilar ones.

A typical similarity measure is cosine similarity:

\[ \mathrm{sim}(a,b)=\frac{a\cdot b}{\lVert a\rVert \lVert b\rVert} \]

Cosine similarity depends only on the angle between two vectors, not on their lengths, so it compares direction in space. For many semantic tasks, that direction is more informative than the absolute length of a vector.
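The formula can be sketched in a few lines of NumPy. The helper name `cosine_similarity` and the three example vectors are illustrative assumptions; the function assumes that neither input is the zero vector.

```python
import numpy as np

def cosine_similarity(a, b):
    """sim(a, b) = (a . b) / (||a|| ||b||); assumes neither vector is all zeros."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

a = np.array([1.0, 2.0, 0.0])
b = np.array([2.0, 4.0, 0.0])     # same direction as a, twice the length
c = np.array([-2.0, 1.0, 0.0])    # orthogonal to a

sim_ab = cosine_similarity(a, b)  # approximately 1.0: length does not matter
sim_ac = cosine_similarity(a, c)  # 0.0: the dot product is zero
```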

Famous examples such as

\[ \text{King} - \text{Man} + \text{Woman} \approx \text{Queen} \]

show that certain semantic relationships can actually appear as directions in space. That is not magic but a consequence of the learned geometry.

Importantly, this structure is not hand-coded. It emerges as a by-product of the training objective. That is what makes embeddings powerful: the network shapes a space in which semantically meaningful neighborhoods and directions arise.
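The analogy arithmetic can be made concrete with a toy example. The vectors below are hand-made, not learned, and their three axes (royalty, a gender direction, "fruit-ness") are assumptions for illustration only. In learned embeddings such relations hold only approximately, and the axes are not individually interpretable.

```python
import numpy as np

# Hand-made toy vectors, NOT learned embeddings.
# Assumed axes: [royalty, gender direction, fruit-ness].
vectors = {
    "king":  np.array([0.9,  0.8, 0.1]),
    "queen": np.array([0.9, -0.8, 0.1]),
    "man":   np.array([0.1,  0.8, 0.0]),
    "woman": np.array([0.1, -0.8, 0.0]),
    "apple": np.array([0.0,  0.0, 1.0]),
}

def cosine(a, b):
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def analogy(a, b, c):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding the three inputs."""
    target = vectors[a] - vectors[b] + vectors[c]
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(vectors[w], target))

result = analogy("king", "man", "woman")
```

Excluding the input words from the candidates is a common convention in analogy evaluation; without it, the nearest neighbor is often one of the inputs.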

4. Static vs. context-dependent embeddings

Earlier methods like Word2Vec or GloVe assign each word exactly one fixed vector. That is elegant but problematic for ambiguity. The word “bank” has different meanings depending on context.

Modern transformers therefore produce context-dependent representations:

\[ h_i = f(x_1, x_2, \ldots, x_n) \]

That means: the representation at a position depends on the entire sequence (in causally masked decoder models, on all tokens up to and including that position). A token is no longer only a fixed point in space but a dynamic state.

For LLMs this is central: language is full of ambiguity, references, and context shifts. A static vector per word would often be too coarse. Only context-dependent representations enable the precision of modern transformer models.
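A deliberately simplified sketch of this effect: one self-attention step with \(Q = K = V = X\), that is, without learned projections or masking. The vocabulary, the random matrix `E`, and the helper `contextualize` are hypothetical and stand in for a trained model. The point is only that the same token ends up with different vectors in different sentences.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab = {"river": 0, "bank": 1, "money": 2, "the": 3}   # hypothetical toy vocabulary
E = rng.normal(size=(len(vocab), 8))                     # random stand-in for learned embeddings

def softmax(s):
    s = s - s.max(axis=-1, keepdims=True)                # subtract row max for numerical stability
    w = np.exp(s)
    return w / w.sum(axis=-1, keepdims=True)

def contextualize(tokens):
    """One self-attention step with Q = K = V = X (no learned projections, no masking)."""
    X = E[[vocab[t] for t in tokens]]                    # static lookups, shape (n, d)
    weights = softmax(X @ X.T / np.sqrt(X.shape[1]))     # each row sums to 1
    return weights @ X                                   # h_i mixes all positions of the sequence

h_river = contextualize(["the", "river", "bank"])[2]     # "bank" next to "river"
h_money = contextualize(["the", "money", "bank"])[2]     # "bank" next to "money"
static_bank = E[vocab["bank"]]                           # identical in both sentences
```

The static row `static_bank` is the same in both cases, while `h_river` and `h_money` differ because each is a weighted mix of its own context.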

5. Positional information

Embeddings alone encode no order. Positional information must therefore be added explicitly:

\[ z_i = e_i + p_i \]

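\(p_i\) can be a fixed sinusoidal vector or a learned one. Other schemes, such as rotary position embeddings (RoPE), do not add a vector at the input; they rotate the query and key vectors inside attention instead. For the model, positional information is essential because “voltage measures current” and “current measures voltage” contain the same tokens but in a different order.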
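A sketch of the additive case \(z_i = e_i + p_i\), using the classic sinusoidal encoding (sine on even columns, cosine on odd columns, base 10000). The toy vocabulary, the random matrix `E`, and the helper names are assumptions for illustration.

```python
import numpy as np

def sinusoidal_positions(n, d):
    """Classic sinusoidal encoding: even columns use sin, odd columns use cos. Assumes even d."""
    pos = np.arange(n)[:, None]                    # shape (n, 1)
    k = np.arange(d // 2)[None, :]                 # shape (1, d/2)
    angles = pos / (10000.0 ** (2 * k / d))        # shape (n, d/2)
    P = np.zeros((n, d))
    P[:, 0::2] = np.sin(angles)
    P[:, 1::2] = np.cos(angles)
    return P

rng = np.random.default_rng(0)
vocab = {"voltage": 0, "measures": 1, "current": 2}   # hypothetical toy vocabulary
E = rng.normal(size=(len(vocab), 8))                   # random stand-in for learned embeddings

def embed(tokens):
    e = E[[vocab[t] for t in tokens]]
    return e + sinusoidal_positions(len(tokens), E.shape[1])   # z_i = e_i + p_i

z_a = embed(["voltage", "measures", "current"])
z_b = embed(["current", "measures", "voltage"])
```

Without \(p_i\), both sentences would consist of the same three vectors in a different order. With it, "voltage" at position 0 and "voltage" at position 2 receive different inputs, so the two sentences become distinguishable.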

6. Why so many dimensions?

Typical embeddings have 768, 1024, 2048, or even more dimensions. That can seem excessive at first. In practice, language is highly complex, and a single vector must represent many factors at once:

- grammatical role,

- semantic meaning,

- style and register,

- domain-specific technical content,

- context and position.

High dimensionality provides degrees of freedom. You can picture a large feature space in which different properties are spread across different axis combinations.
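One way to get a feeling for these degrees of freedom is to look at random directions. The sketch below uses random Gaussian vectors, not learned embeddings, and the helper `mean_abs_cosine` is a hypothetical name. It estimates how strongly two random directions overlap on average in a low-dimensional and in a high-dimensional space.

```python
import numpy as np

def mean_abs_cosine(d, n=200, seed=0):
    """Average |cosine similarity| over all distinct pairs of n random Gaussian vectors in R^d."""
    rng = np.random.default_rng(seed)
    X = rng.normal(size=(n, d))
    X /= np.linalg.norm(X, axis=1, keepdims=True)   # unit length, so dot product = cosine
    S = X @ X.T
    upper = S[np.triu_indices(n, k=1)]              # each pair once, diagonal excluded
    return float(np.abs(upper).mean())

low = mean_abs_cosine(2)      # random directions in the plane overlap strongly
high = mean_abs_cosine(768)   # random directions in 768 dimensions are nearly orthogonal
```

In high dimensions there is room for many nearly independent directions, which is one plausible reading of why many properties can coexist in a single vector.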

7. How embeddings are learned

Embeddings are not hand-designed separately; they are trained jointly with the rest of the network. When the model predicts the next token, gradients flow all the way back into the embedding matrix.

Thus the vectors learn exactly the structure that helps the overall task. Embeddings are not an isolated module but an integral part of the trained system.

In large language models, the embeddings are trained end-to-end with all other parameters, so semantic geometry, attention behavior, and the output distribution become tightly coupled. That is also why embeddings should be understood not in isolation but in the context of the full neural network.
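The gradient flow into the embedding matrix can be sketched with a heavily reduced model: one lookup, one output projection, and a softmax cross-entropy loss for the next token. The projection `W`, the toy sizes, the learning rate, and the function `loss_and_grad` are assumptions for illustration. A real LLM has many layers in between, and its gradients come from automatic differentiation rather than a hand-written formula.

```python
import numpy as np

rng = np.random.default_rng(0)
V, d = 5, 4                                  # toy sizes (assumed)
E = 0.1 * rng.normal(size=(V, d))            # input embedding matrix
W = 0.1 * rng.normal(size=(d, V))            # hypothetical output projection to vocabulary logits

def loss_and_grad(E, x, y):
    """Cross-entropy for predicting token y from token x, plus the gradient w.r.t. E."""
    e = E[x]                                 # embedding lookup
    logits = e @ W
    p = np.exp(logits - logits.max())
    p /= p.sum()                             # softmax over the vocabulary
    loss = -np.log(p[y])
    dlogits = p.copy()
    dlogits[y] -= 1.0                        # d loss / d logits = p - onehot(y)
    grad_E = np.zeros_like(E)
    grad_E[x] = W @ dlogits                  # only the looked-up row receives gradient
    return loss, grad_E

x, y = 2, 4                                  # current token id and the next token to predict
loss_before, grad_E = loss_and_grad(E, x, y)
E_new = E - 0.5 * grad_E                     # one plain gradient step with learning rate 0.5
loss_after, _ = loss_and_grad(E_new, x, y)
```

In this reduced setting a single training example changes only the row of the token that was actually looked up; over many examples, every row that occurs in the data is shaped by the prediction task.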

8. Applications and limitations

Embeddings are used not only in LLMs but also for:

- similarity search,

- retrieval systems,

- clustering,

- recommendation systems,

- multimodal models with text, image, and audio.
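Similarity search and retrieval mostly reduce to ranking stored vectors by their similarity to a query vector. A minimal brute-force sketch follows; the document vectors are hand-made and the function name `top_k` is hypothetical. Production systems typically use approximate nearest-neighbor indexes instead of scoring every item.

```python
import numpy as np

def top_k(query, items, k=2):
    """Return indices and scores of the k rows of `items` most cosine-similar to `query`."""
    q = query / np.linalg.norm(query)
    M = items / np.linalg.norm(items, axis=1, keepdims=True)
    scores = M @ q                               # cosine similarity per item
    order = np.argsort(-scores)[:k]              # best first
    return order, scores[order]

# Hypothetical document embeddings, hand-made for illustration.
docs = np.array([
    [1.0, 0.0, 0.0],
    [0.9, 0.1, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
])
query = np.array([1.0, 0.2, 0.0])

idx, scores = top_k(query, docs, k=2)
```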

An important limitation remains: embeddings are distributed representations and hard to interpret directly. A single coordinate of the vector usually has no clear meaning on its own. What matters is almost always the pattern across many dimensions.

In addition, embeddings can inherit systematic biases from the training data. Proximity in space therefore does not automatically mean “truth”; it reflects statistical patterns in the training material.

9. Engineering perspective

Embeddings can be read as a state transformation from discrete symbols into a continuous signal space.

From an electrical-engineering viewpoint this is very familiar: a system often does not operate directly on raw symbols but on transformed state quantities or feature vectors. Embeddings play exactly that role.

The notion of a feature space is also familiar to engineers from signal processing, state-space models, and statistical pattern recognition. What is new in LLMs is less the basic principle than the enormous scale and dynamic context dependence.

10. Conclusion

Embeddings are the foundation of modern language models. They turn discrete tokens into vectors, make semantic relationships geometrically tangible, and provide the starting point for all further processing in the neural network.

Without embeddings there would be no attention, no context-dependent representations, and ultimately no capable LLMs in their present form.

If you want to see how these vectors are processed further in the model, read “How does a transformer work?” For the big picture tying together embeddings, weights, activations, and training, see “Neural networks behind LLMs.”

Author: Ruedi von Kryentech

Created: 14 Apr 2026 · Last updated: 14 Apr 2026

Technical content as of the last update.